Unless you’ve been living under a rock, and probably even if you have, you’ve noticed that ‘AI’ is being promoted as the solution to everything from climate change to making tacos. There’s an old joke: how do you know when a politician is lying? Their mouth is moving. Similarly, anytime businesses relentlessly push something, the first question that should come to mind is: how are they trying to make money?

Microsoft, in particular, has, as the saying goes, gone all in, rebranding its products based on OpenAI’s ChatGPT large language models as Copilot and embedding them across its catalog. Leaving aside, for the sake of this essay, the question of what so-called AI actually is (hint: statistics), it’s reasonable to ask of this push: what is going on?

Ideology certainly plays a role: that is, the belief (or at least, the assertion) of a loud segment of the tech industry that they are building Artificial General Intelligence – a successor to humanity, genuinely thinking machines.

Ideology is an important factor, but it’s more useful to place technology firms such as Microsoft back within capitalism in our thinking. Doing so is a way to reject the diversions this sector uses to obscure the fact that these firms are capitalist enterprises like any other.

To do this, let’s consider Vladimir Lenin’s theory of imperialism as expressed in his essay, ‘Imperialism, the Highest Stage of Capitalism’.

In January of 2023, I published an essay on my blog titled ‘ChatGPT: Super Rentier’.

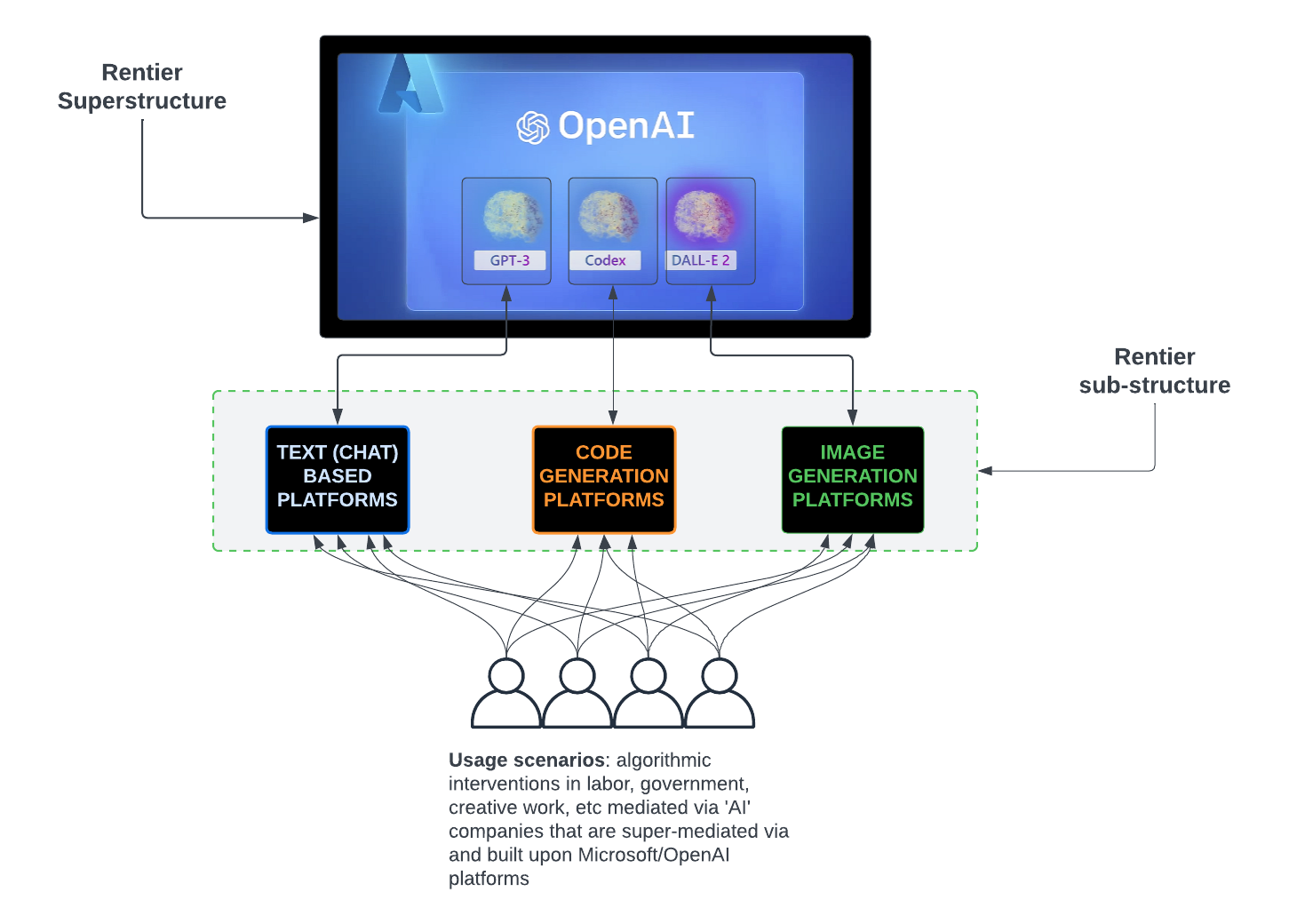

The thesis of that essay is that Microsoft’s partnership with, and investment in, OpenAI, and the insertion of OpenAI’s large language model software, known as ChatGPT, into Microsoft’s product catalog, were meant to create a platform that would turn Microsoft into a kind of super rentier – or super landlord – of AI systems. Others, sub-rentiers, would build their own platforms using Microsoft’s platform as the backend, making Microsoft the landlord of landlords.

With this in mind, let’s take a look at this visualization of Lenin’s concept of imperialism I cooked up:

For me, the key element is the relationship between two things. The first is the tendency towards monopoly, which leads to stagnation (after all, what’s the incentive to stay sharp if you control a market?). The second is the expansion of capitalist activity into other, weaker territories to temporarily resolve this stagnation. This is the material motive for capitalist imperialism or, as Lenin also phrased it, parasitism.

Let’s apply this theory to Microsoft and its push for AI everywhere:

Microsoft, as a software firm, once derived most of its profit from selling products such as SQL Server, Exchange Server and the Office Suite.

This became a near monopoly for Microsoft as it dominated the corporate market for these and other types of what’s known as enterprise applications.

This monopoly led to stagnation – how many different ways can you try to derive profit from Microsoft Office, for example? By stagnation, I don’t mean that Microsoft did not make money or profit from its dominance, but this dominance no longer supported the growth capitalists demand.

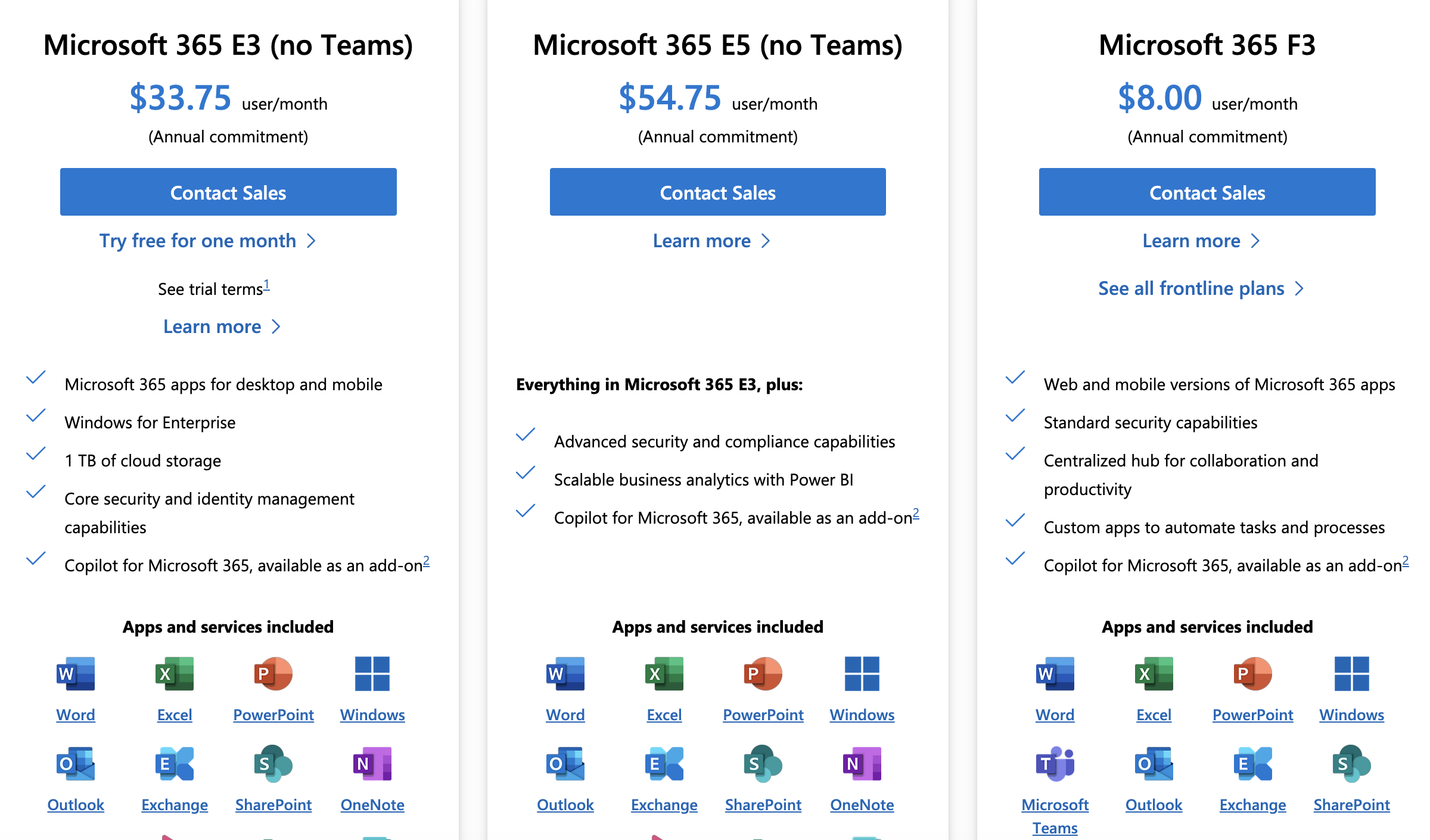

The answer, for a time, was the subscription model of the Microsoft 365 platform. It moved corporations from a model in which products such as Exchange were licensed and hosted in-house in corporate data centers to one in which there was a recurring charge for access and a guaranteed revenue stream for Microsoft.

No longer was it possible for a company to buy a copy of a product and use it even after licensing expired. Now, you have to pay up, routinely, to maintain access.

After a time, even this led to a near monopoly and the return of stagnation, as the market became saturated and left little room for expansion.

Into this situation steps ‘AI’.

By inserting AI – chatbots and image generators – into every product and pushing its corporate customers to use it, Microsoft is enacting a form of the imperialist expansion Lenin described: a colonization of business processes, education, art, filmmaking, science and more on an unprecedented scale.

But what haunts the AI push is the very stagnation it is supposed to remedy.

There is no escape from the stagnation caused by monopoly, only temporary fixes which merely serve to create the conditions for future decay and conflict.
