This post presents the notes and arguments I used during my interview with hosts Ned and Ethan on the Day Two Cloud podcast (https://daytwocloud.io/). The subject is the need for a government-owned public cloud as an alternative to the private Cloud Solution Providers (CSPs).

The primary example of why this is needed comes from an analysis of Amazon, which owns both AWS and Amazon’s retail business. My position is that there is abundant evidence that individuals and small and medium-sized businesses need a computing utility that:

- Can be publicly held to account for data mining,

- Will not be used for competitive advantage, and

- Serves as an example of zero-carbon compute and expands access.

Podcast Link

Listen to the interview here.

Main assertions:

Criticality

IPCC on climate change: https://www.ipcc.ch/

We have entered a critical phase of our history in which computing power is broadly needed for both commercial and non-commercial purposes (climate change is the main driver of this urgency, but there are other factors). A computing fabric offered as a common utility would empower more individuals and organizations to truly innovate, creating solutions that are presently out of reach because of unequal access to computing power.

Inequality

Public cloud and emergent technologies such as serverless and cloud-hosted machine learning have, in some sense, ‘democratized’ access to capabilities previously available only to deep-pocketed multinationals (if they were available at all). However, the direction and priorities of cloud solution provider platforms such as AWS, Azure and GCP, although perhaps ‘customer driven’, typically do not reflect the longer-term needs of the wider societies these businesses operate in. For example, operating at scale on any of these platforms becomes cost-prohibitive for capital-deprived people and organizations. This situation is only likely to get worse.

As an example, see the Washington Post’s coverage of the coronavirus’ impact on communities without Internet access: https://www.washingtonpost.com/technology/2020/03/16/schools-internet-inequality-coronavirus/

The Myth of Private Innovation as Superior to Government Efforts

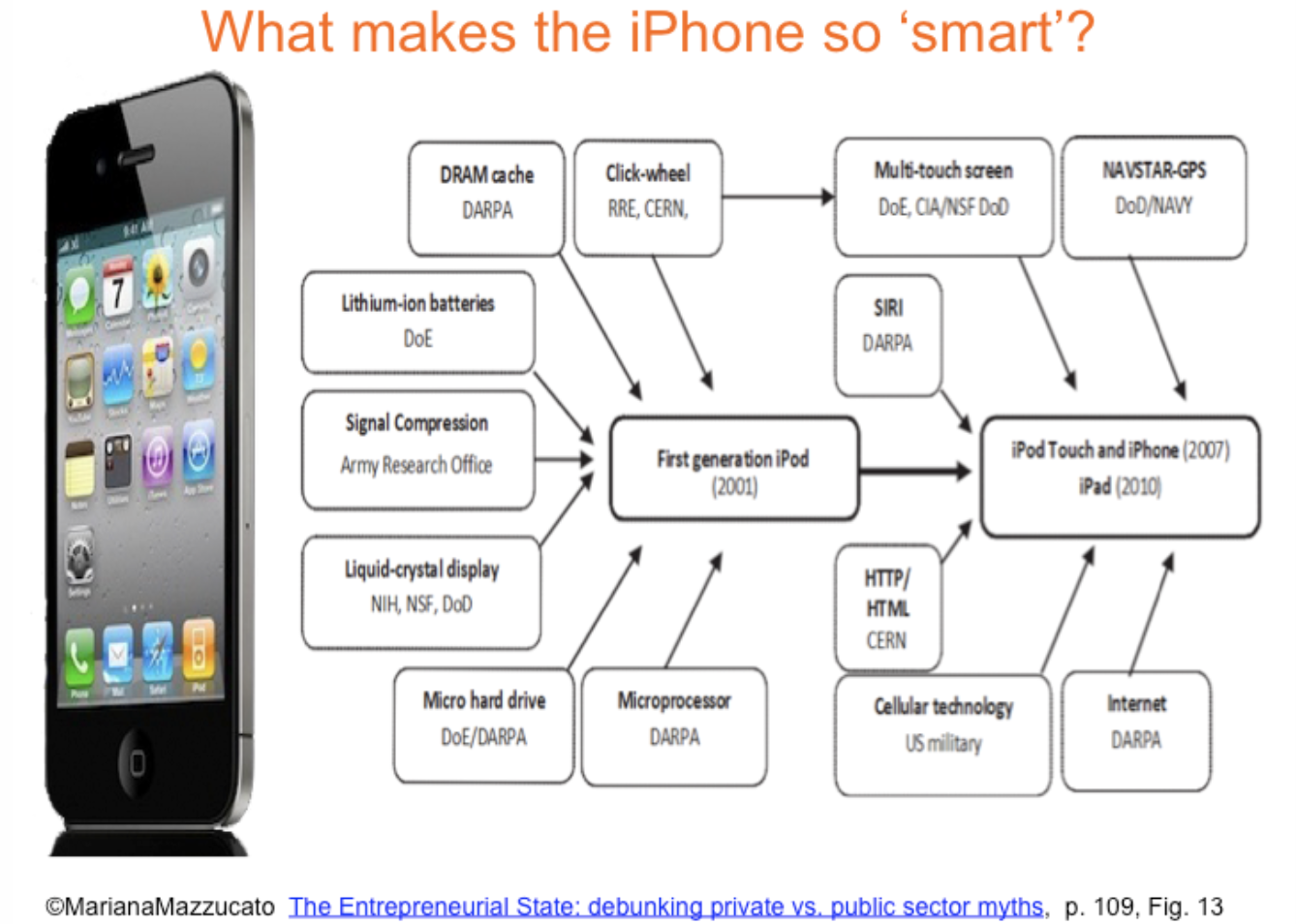

In the United States (and to a varying extent elsewhere) there’s a belief that governments cannot perform the sort of work necessary to create a robust, well-maintained cloud infrastructure (or much of anything, really). This notion persists even though all the elements of the cloud platforms we use started as government initiatives (ARPANET, which grew into the Internet; microprocessor development; etc.). My argument is that this belief is a fallacy that must be challenged: it serves only the hagiography of business leaders such as Jobs and Musk and prevents us from acting in our own interests.

Mariana Mazzucato ably dissects this in her book, ‘The Entrepreneurial State’:

https://marianamazzucato.com/entrepreneurial-state/

As an example, Mazzucato diagrams the government sources of the iPhone’s success:

Additional evidence of the dependence of supposed ‘innovators’ on government can be found in the histories of Tesla and Oracle.

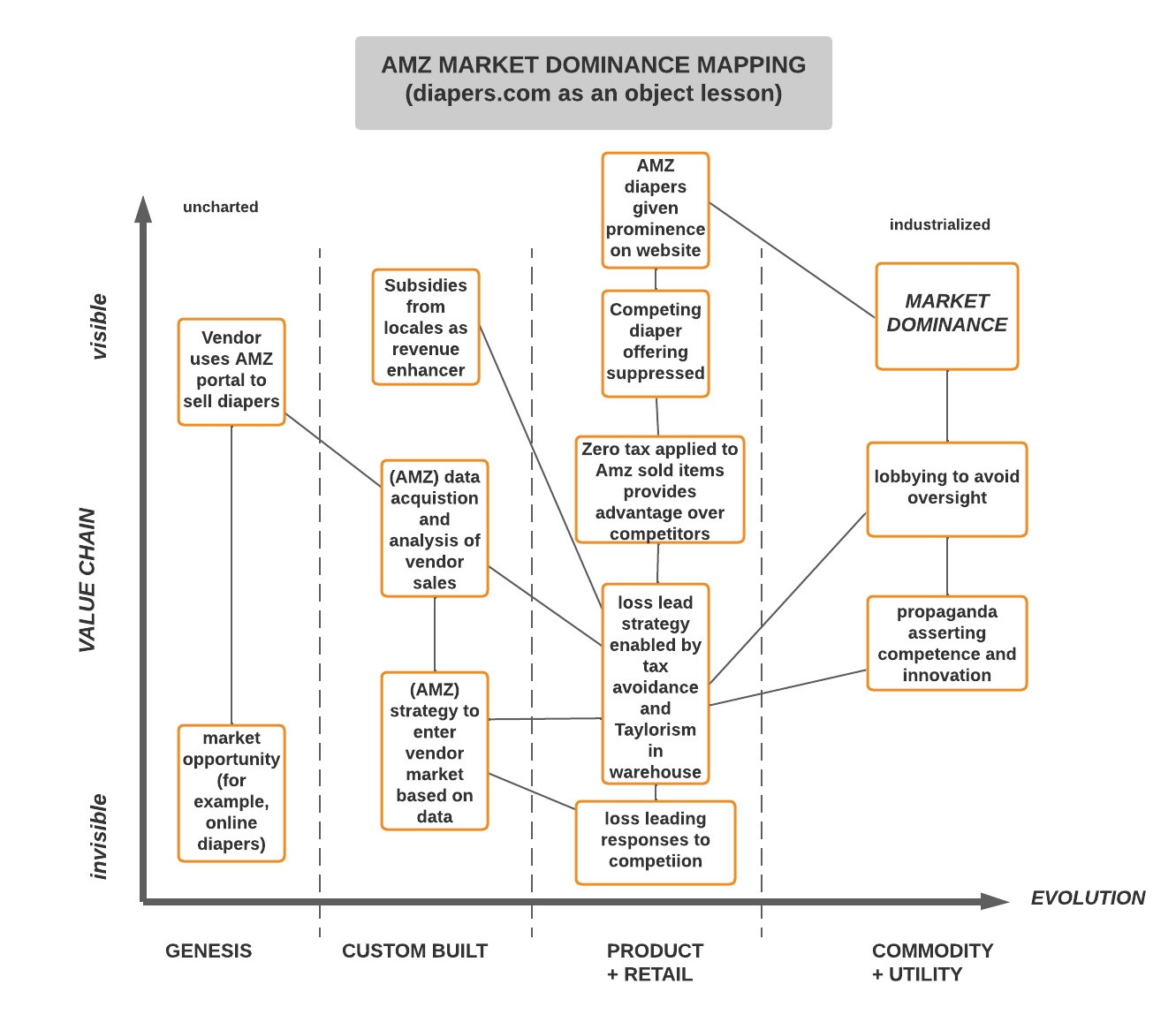

Monopoly Power

The House hearings on monopoly power in the tech industry concluded, among their assessments of other firms (Facebook, Google, Apple), that Amazon used the combination of its market position and the computational power of AWS to muscle even the smallest competitors out of their respective markets. The story of Diapers.com was used as an object lesson. Below, I map out the points from the report using a Wardley map:

Ethics

Although technologists typically focus on the instrumental aspects of a technology (note, for example, the circular debates about Kubernetes vs. serverless that ignore the question of purpose), there are always ethical considerations. One of the most acute examples is AI, and machine learning in particular: algorithms that affect people’s lives are being brought online without full regard for the consequences.

This raises a question: can private firms that profit from deploying and promoting powerful technologies (such as SageMaker on AWS and Azure ML Studio) be trusted, on their own, to build with ethics foremost in mind, seriously and consistently over time?

A publicly accountable technology stack would truly democratize this by placing oversight in public hands and making it a central part of any platform.

Note the still-unfolding story of AI ethics researcher Dr. Timnit Gebru’s firing from Google, covered in the post below:

Standing with Dr. Timnit Gebru — #ISupportTimnit #BelieveBlackWomen

The circumstances of Dr. Gebru’s firing raise the question: would she have been fired if Google were truly dedicated to AI ethics? And if there were an equally dynamic, publicly accountable platform Dr. Gebru could have worked on, would there be any debate over the need for ethics (i.e., would there be any tension between the goal of building ethical technology and the pursuit of profit)?

Ethan’s Questions and My Answers

Why should there be such a thing?

The primary benefit of public cloud is its ability to provide computing (and related) resources as a utility. To date, this has been viewed as a benefit to business (governments are also seen as customers, as the JEDI contract shows, but business advantage is the primary driver).

This shapes the priorities and structure of the public cloud (for example, the current focus on hybrid workloads primarily speaks to the concerns of enterprises).

What’s missing are platforms that bring the advantages of a computing utility to bear on societal needs. Imagine, for example, a team of researchers studying the effects of climate change on their local community. Access to a public service cloud computing platform would accelerate the pace of such vital work.

For whom would this offering exist?

The offering would exist to serve the requirements of individuals, non-profits, small communities and others who have a need for computing power but do not have the deep pockets of corporations and who want to use a platform whose priorities are democratically determined rather than being driven by profit incentives.

What are the typical adoption drivers for organizations who might like this idea?

Lower runtime cost, public accountability, and stability would be among the primary adoption drivers (no doubt there are others not yet thought of). Consider, for example, a community college or a low-income community that wants to provide computational resources to students and under-served communities; a public utility cloud could serve these needs.

Does the ridiculous pace of so-called innovation at AWS et al. make a presumably slower-moving public cloud a non-starter?

The ‘innovation’ we see from the major cloud solution providers is a function of the unified APIs upon which their services are built. The recently announced AWS SageMaker Pipelines, for example, is possible because adding CI/CD pipelines to a machine learning fabric is a latent capability of those APIs that only needs to be engineered into a product (this doesn’t minimize the effort required to create new features).

These innovations are created to address the needs of large-scale business (for example, IoT for manufacturing and cloud-scale machine learning for the hydrocarbon industry).

It is debatable, however, whether many of these innovations are important from a broader point of view. In the second decade of the 21st century, we can consider computational power to be a firmly established need of a complex society. But rapid change within private entities, untethered from a broader catalog of societal needs, does nothing to diminish the usefulness of a public computational fabric.

Also, there’s an assumption that a government managed service would be inferior to private services. My argument is that this isn’t based on evidence but on a bias that’s been woven into our discourse which has only served the interests of private power (for example, we praise SpaceX but fail to celebrate NASA’s decades of achievements).

What am I getting in a publicly owned utility cloud that I would not get from a commercial offering?

- Public control of priorities

- Public control of the pricing models

- The broader distribution of computing power to under-served communities and organizations

- A counterweight to private power, which should not be able to operate unchecked

How should it be funded?

We can imagine a dual funding model of taxation (a dirty word to many, and yet how else do we have roads?) and direct pricing. A community college, to return to that example, would both benefit from public financing of the platform and pay a nominal, scaled run rate for access to services.

Governance model. You mentioned NIST in the [Twitter] thread, but I can see an argument for interested consumers having input as well. There are lots of angles to come at this from, which could quickly get unwieldy.

In this model, we move away from the idea of ‘consumers’ (which barely existed in its present form only a few decades ago) and back to that of citizens with a collective interest in a public good. As with other public goods, such as road systems, public participation does not automatically mean chaos. There are lessons to be learned from effective school boards and consensus-based governance. Consider a scenario: Susan has created a cost-benefit analysis showing the value of shifting the emphasis of her municipality’s computing grid towards serverless, and she must make that case to her fellow citizens. Although techies often pride themselves on how complex and opaque their areas of expertise are, the truth is that if value cannot be clearly stated, the fault is with us. We should have faith that a plurality of people who see a direct benefit from the investment will act in the public interest.

Where would the metal reside? Would existing commercial interests become providers, i.e. adding capacity as demand grows? And how does that align with HIPAA, GDPR, etc.?

Many cities and towns have suitable locations that are under-utilized or not utilized at all: there are many empty warehouses, former malls, etc. that could serve the purpose. There should be no conflict between regulations such as HIPAA and GDPR, which are built on the idea of guarding privacy, and hosting solutions on a public computing utility. In fact, one could argue that these regulations are more likely to be strictly adhered to by public servants than by private organizations that seek ways to use data for advertising.

CSPs might participate as service providers, but they would have to strictly adhere to regulatory and contractual obligations lasting decades (longer than the planning horizon of the average business). This would probably act as a disincentive.

If this were regional, would there be an interconnection of global public clouds beyond simply the Internet? I raise this as Google Cloud, Azure, and AWS all have their own global networks.

We can foresee the creation of a standard kit for compute, database and storage with a zero-carbon power infrastructure (imagine an abandoned mall retrofitted as a data center, powered by solar and wind according to a common template). These municipal ‘cubes’ could form the basis of a national and then international superstructure. The National Science Foundation Network (NSFNET) provides one model that could be updated.

American vs. European perspectives on the government being in the middle of things.

The primary difference between US and European perspectives is that, in the US, many people believe, often without evidence or on the strength of anecdotes such as bad experiences at the DMV, that private organizations are better than governments at this sort of ambitious initiative. Many US-ians genuinely believe that Twitter is a cutting-edge technology that could only have been produced by a ‘visionary’ leader. This fallacy exists in Europe too, but it is less deeply entrenched there, because people demand more of their governments and expect to receive services in return for taxation.